The Evolution of DevOps | Development and Operations Come Together

Ancient History (Operations)

I started my technology career in operations, as a third-shift Systems Operator. The job consisted of “running jobs” (running a program that either produced some printed output or moved some data around), backing up the system, and printing and distributing reports. These were basic tasks, but necessary at the time. As my career progressed, I learned more about operations and was promoted to Systems Manager.

As a Systems Manager, I was responsible for keeping the system up to date and performing well under the user load during peak times. I monitored the performance of the system and user response times. I also tuned and re-indexed the database to ensure it provided peak performance and short response times for the queries required for user transactions and reporting. It was my job to ensure the system was always available and that we could recover from a disaster quickly. I implemented new technology such as mirroring the database and file system between two systems to provide load balancing and high availability as well as on-line backups.

“The System” was called a minicomputer at the time, but it took up an entire room. It was an HP3000 980/100. The 100 in the model’s name designated that the system had a single processor. At first, the one system supported all the users including sales, accounting, and the warehouse (pick-pack, shipping, and receiving). I think there were probably around sixty people using the system at first. The system handled all the processing for these users as they connected using dumb terminals without any processing power.

As the company grew, so did the load on the system. New employees were added, and the system needed to scale to meet the new load. The company upgraded to a 980/200 (two processors) and then to a 980/400 (four processors) to keep up. When we could no longer scale vertically, we clustered multiple systems together through file system and database mirroring. We could then balance the load across multiple systems (in this case, two 980/400s). This offered the side benefits of on-line backup (backing up one system while users were on the other) and failover (if one system failed, you could still use the other).
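The load balancing and failover described above can be sketched in a few lines of modern code. This is only an illustration of the idea, not how the HP3000 cluster actually worked: the `MirroredPair` class and the system names `system_a` and `system_b` are hypothetical, and the sketch assumes both systems hold identical mirrored data, so any healthy one can serve a user.

```python
from itertools import cycle
from typing import List, Optional


class MirroredPair:
    """Round-robin selection across mirrored systems, skipping any that are down.

    Hypothetical illustration: assumes every system holds the same mirrored data.
    """

    def __init__(self, names: List[str]) -> None:
        self.healthy = {name: True for name in names}
        self._order = cycle(names)
        self._count = len(names)

    def mark_down(self, name: str) -> None:
        # Take a system out of rotation (for a backup, or after a failure).
        self.healthy[name] = False

    def mark_up(self, name: str) -> None:
        # Return a system to rotation.
        self.healthy[name] = True

    def pick(self) -> Optional[str]:
        # Try each system at most once, starting from the next in rotation.
        for _ in range(self._count):
            candidate = next(self._order)
            if self.healthy[candidate]:
                return candidate
        return None  # every system is down


if __name__ == "__main__":
    pair = MirroredPair(["system_a", "system_b"])
    print([pair.pick() for _ in range(4)])  # alternates between the two systems
    pair.mark_down("system_a")              # e.g. backing up system_a
    print([pair.pick() for _ in range(2)])  # all users land on system_b
```

Marking one system down covers both side benefits mentioned above: it is how you would take a system out of rotation for an on-line backup, and it is also what happens on failure.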

Operations also kept all the software up to date. Software updates were no small undertaking. It typically meant taking the system down for some time, often hours in the middle of the night while the system was normally down for backup, and then testing everything when the update was complete. Software consisted of more than just one main application which ran the business. We had to keep the monitoring software, the database tuning software, the database replication software, the job scheduling software, and the operating system up to date as well.

The Information Technology (IT) department handled all this work. Some companies called this Computer Operations. We had a team of around four Systems Operators, two Systems Managers, three programmers, and the Director. The programmers mostly made configuration changes to the main application and helped users create new reports not offered by the main application.

This was operations back in the day. It’s changed a lot over the years, but the responsibilities of the people involved in operations are still very similar. Many companies don’t own servers anymore; much of that work has moved to the cloud. To some extent, companies still need to manage these cloud servers in much the same way as we did way back when.

Slightly More Recent History (Development)

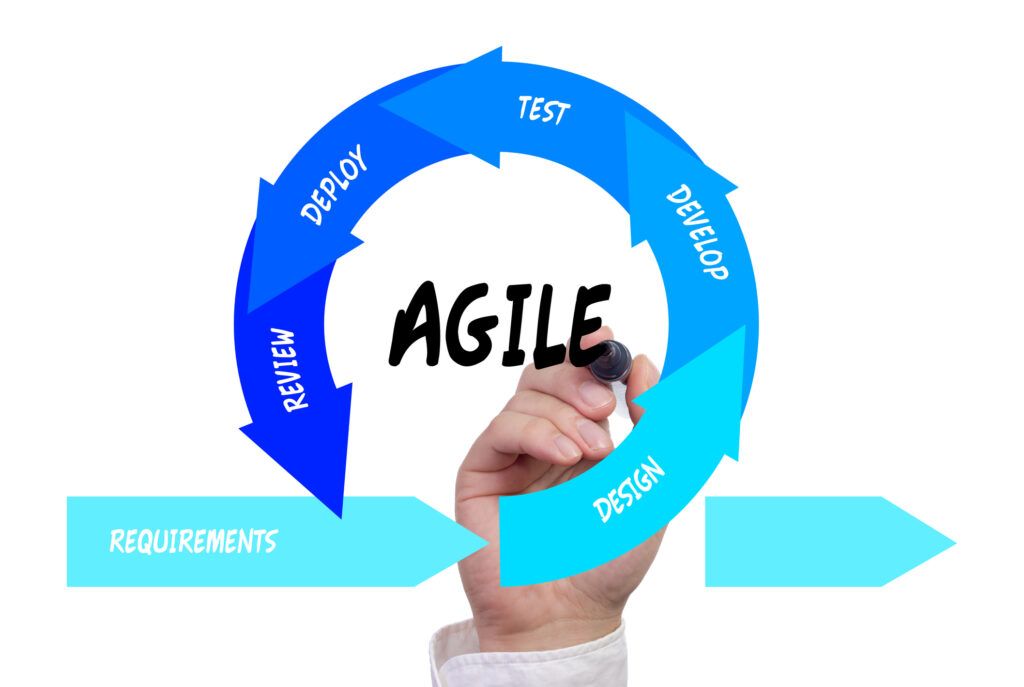

After working in operations for a few years, I landed a new job at a software development company. I started there as a Support Engineer and progressed through several positions, including Support Manager, Product Manager, Pre-Sales Engineer, and Professional Services (Implementations). During this time, and especially my time as a product manager, I learned about the Software Development Life Cycle (SDLC). I’ve written a separate article about different development methodologies, but in short, it goes something like this…

- Plan – this is requirements gathering and writing specifications or stories

- Design – wireframes or sketches of the screens (UX/UI) and design to make it look great

- Development – the actual coding part which includes building or compiling the application

- Test – QA to make sure it works as expected

- Deploy – allow users to actually use it

Software development organizations typically employ product managers, project managers, UX/UI specialists, designers, developers (programmers), and QA engineers. The developers typically help with the deployment. Some companies do their own software development in-house (like Facebook or Google). Others hire companies like Saritasa to do the development for them. Still others license software from companies that develop it (like Microsoft or Oracle).

Two Worlds

There have always been two groups of professionals involved with application technology: Software Development and Operations. In some cases, both groups work for the same company, like Facebook and Google, where they develop their own software, host the software, and support the end-users of their applications. In other examples, one company might develop the software for another or license the software to multiple companies. The companies using the software maintain the hosting environment and supporting applications (traditional operations).

Sometimes worlds collide or conflict and these two groups have trouble getting along. Software developers might not consider the hosting environment when writing the application. Operations might not fully understand the hosting requirements and how to best scale the software to support their type of use and load. Software updates may not be simple and easy to deploy. Updates may require the two groups to work closely together, which can be difficult if they are from two different cultures.

If only we could all just get along and work as one team…

DevOps

A new methodology has emerged that combines these two worlds into one, hence the name DevOps (development and operations). You can learn more about its history and meaning on the Wikipedia page. For a high-level explanation, read on…

In my view, DevOps is a bit of an extension to the deploy phase of the SDLC described earlier. With DevOps, it looks more like:

- Coding

- Building

- Testing

- Packaging

- Deploying

- Configuring

- Monitoring

As you can see, most of the old SDLC takes place before the Coding, Building, and Testing phases above. Everything after Testing is traditional operations. The idea is to have a cohesive team and toolset so that all these processes flow easily from one to another. Instead of having two teams (dev and ops), you have one team that works together. Developers consider how the application will need to be packaged and released while they code. Toolsets standardize and automate the process of packaging and deploying the application to a cloud environment. Technologies such as containerization help standardize the environments in which the application will run.
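To make the idea of one automated flow concrete, here is a minimal sketch of the phases above chained into a single pipeline. It is an illustration under stated assumptions, not a description of any particular tool: the `run_pipeline` function and the placeholder steps `always_ok` and `always_fail` are hypothetical, and a real pipeline would call build, test, and deployment tooling in their place.

```python
from typing import Callable, List, Tuple

# Phase names taken from the list above; the step functions are placeholders.
STAGES = [
    "coding", "building", "testing", "packaging",
    "deploying", "configuring", "monitoring",
]


def run_pipeline(stages: List[Tuple[str, Callable[[], bool]]]) -> List[str]:
    """Run each stage in order and stop at the first failure.

    Returns the names of the stages that completed successfully.
    """
    completed = []
    for name, step in stages:
        if not step():
            break  # a failed stage blocks everything after it
        completed.append(name)
    return completed


def always_ok() -> bool:
    # Hypothetical stand-in for a real step (compile, run tests, push an image...).
    return True


def always_fail() -> bool:
    # Hypothetical stand-in for a step that fails, such as a failing test suite.
    return False


if __name__ == "__main__":
    happy_path = [(name, always_ok) for name in STAGES]
    print(run_pipeline(happy_path))

    # If testing fails, nothing is packaged or deployed.
    broken = [(n, always_fail if n == "testing" else always_ok) for n in STAGES]
    print(run_pipeline(broken))
```

The point of the sketch is the ordering and the early stop: because every phase runs through the same automated flow, a failure in testing prevents packaging and deployment without anyone having to intervene.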

There are still separate positions and responsibilities within a DevOps organization. Pure software developers focus more on the code of the application itself. DevOps engineers focus on the packaging, deployment, and day-to-day care and feeding of the application. As a result, software developers no longer need to spend cycles copying files over to production or figuring out how to deploy a new release. Instead, the DevOps engineers take care of it and the developers can focus on coding the application.

There are many benefits to the DevOps methodology including:

- Improved application stability (end users experience fewer bugs)

- Improved software performance (end users have a better, more responsive experience)

- Reliable Infrastructure (the infrastructure required to support an application is considered and included when the application is developed and deployed; services can also be set up to be redundant and even self-healing)

- Faster Deployment (because of packaging and automation, releasing new versions of an application can be done very quickly with little effort)

- Faster Problem Solving and Recovery (when issues do arise, they can be resolved quickly due to cloud-native monitoring tools)

- Offloading pure development resources (allowing them to focus on development and not deployment and maintenance)

- Fewer human mistakes as packaging/deploying processes are automated

We Can Help

Saritasa has put together a robust toolset and workflows that we use for DevOps. We invested a significant amount of time into our ability to provide this service to our clients. Our toolset uses a lot of what Amazon Web Services (AWS) and Kubernetes have to offer. Our methodologies take advantage of these services to deploy applications quickly (with high quality yet low effort). Production environments are elastically scalable, robust, resilient, and reliable. We support our clients from the start of the cycle (requirements gathering) all the way through to deployment and daily monitoring and maintenance.

Saritasa also offers DevOps support as a separate service. We can help you get the most out of your production environment or set up a new environment using our toolset to improve all aspects of your application’s performance and reliability.
